February 2026 has been a major month for AI model releases. There were more than we could cover in full, but we have tested a few and reviewed some that are upcoming. Seven major models landed in a single month: Google, Anthropic, OpenAI, xAI, and Alibaba all dropped significant updates within weeks of each other, benchmark records were broken again, and frontier performance kept advancing on all fronts. If you are trying to work out what any of it means for your business, your workflows, or your budget, this is the breakdown you actually need.

We have been testing these models, digging into the benchmarks, and working out which ones we will be using for the rest of 2026.

Gemini 3.1 Pro put Google back at the top of the benchmark charts for the first time in a while. Claude Sonnet 4.6 gave Anthropic a model that performs at near-Opus level but at Sonnet pricing. Grok 4.20 introduced a genuinely new architecture: four AI agents running in parallel. And Qwen 3.5 from Alibaba continues to show that open-source models can close the gap faster than most expected.

As AI models like Gemini, Claude and GPT become more powerful, many businesses are now exploring how they can use them for automation, customer support and internal workflows. At Design for Online® we help companies implement these tools through AI automation services, integrating large language models with CRMs, email systems and business applications.

If you are an agency, a developer, or a business deploying AI across multiple workflows, the choice of model has never mattered more, or been harder to make.

The main models that dropped in February 2026

| Model | Released | Developer | Type |
|---|---|---|---|
| Gemini 3.1 Pro | Feb 19 | Google DeepMind | Proprietary |
| Claude Opus 4.6 | Feb 4 | Anthropic | Proprietary |
| Claude Sonnet 4.6 | Feb 17 | Anthropic | Proprietary |
| GPT-5.3 Codex | Feb 5 | OpenAI | Proprietary |
| Grok 4.20 | Feb 17 | xAI | Proprietary |
| Qwen 3.5 | Feb 2026 | Alibaba | Open-weight |

A Quick Note on Subscriptions vs API Costs

Before we get into the models, this is worth clarifying because it trips up a lot of people.

Consumer subscriptions (Claude Pro at around £17/month, ChatGPT Plus at around £16/month, Gemini Advanced from around £18.99/month) give you access to the models through the chat interface with generous usage limits. They are great for individuals using AI day to day.

API costs are entirely separate. If you are building tools, automations, or integrating AI into your own products and workflows, you pay per token regardless of whether you also hold a subscription. A Claude Pro subscription does not reduce your API bill. These are two different billing relationships with the same company.

For most agencies and developers, both make sense: a subscription for everyday use, and API access budgeted separately for production systems.

How businesses are using these AI models

Many of the models listed below are already being used by businesses to automate marketing, support and operations. Choosing the right model is only part of the challenge. Integrating it properly into your business systems is where the real value comes from. Our AI consulting services help companies choose the right AI stack and deploy automation safely and effectively.

1. Gemini 3.1 Pro

Google had been a little quiet after launching numerous large language, image and video models a little while ago (in AI terms), but Gemini 3.1 Pro changes that.

Released 19 February, it posted leading scores on 13 of 16 benchmarks. The headline number is 77.1% on ARC-AGI-2, a test of pure logic and novel problem-solving that models cannot memorise their way through. That is more than double Gemini 3 Pro’s score. On GPQA Diamond (expert-level scientific knowledge), it hit 94.3%, ahead of both Claude Opus 4.6 and GPT-5.2.

For agentic work, multi-step reasoning, and large-context tasks, this is the strongest general-purpose model available right now. Google also kept the pricing identical to Gemini 3 Pro, so you are getting a major upgrade at no extra cost.

Models like Gemini 3.1 Pro and Claude Sonnet 4.6 are now capable of analysing large datasets and generating highly optimised content. Businesses are increasingly using these capabilities to improve their online visibility through AI-assisted SEO strategies. Our SEO services help businesses use AI tools to analyse search data, optimise content and improve rankings in Google.

Best for: Developers and agencies building agentic systems who need top benchmark performance at the best price.

2. Claude Opus 4.6

Claude Opus 4.6 is Anthropic’s flagship. While Gemini 3.1 Pro leads on raw benchmark breadth, Opus holds its own where it matters for real professional work.

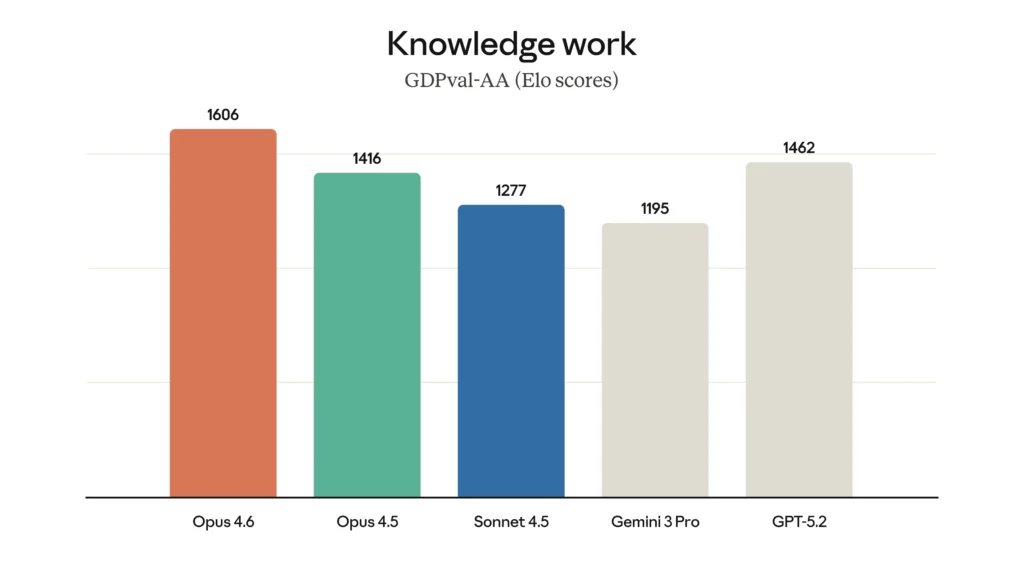

It scored 68.8% on ARC-AGI-2 and posts 1,606 Elo on the GDPval-AA human preference leaderboard versus Gemini’s 1,317. That gap reflects something benchmarks do not always capture: the quality of Claude’s output on expert tasks. For legal analysis, complex editorial and nuanced strategic writing, humans consistently prefer what Opus produces.

On agentic coding, it scored 80.8% on SWE-Bench Verified, the highest of any model on this benchmark.

Best for: High-stakes professional work and precision coding where output quality matters more than cost.

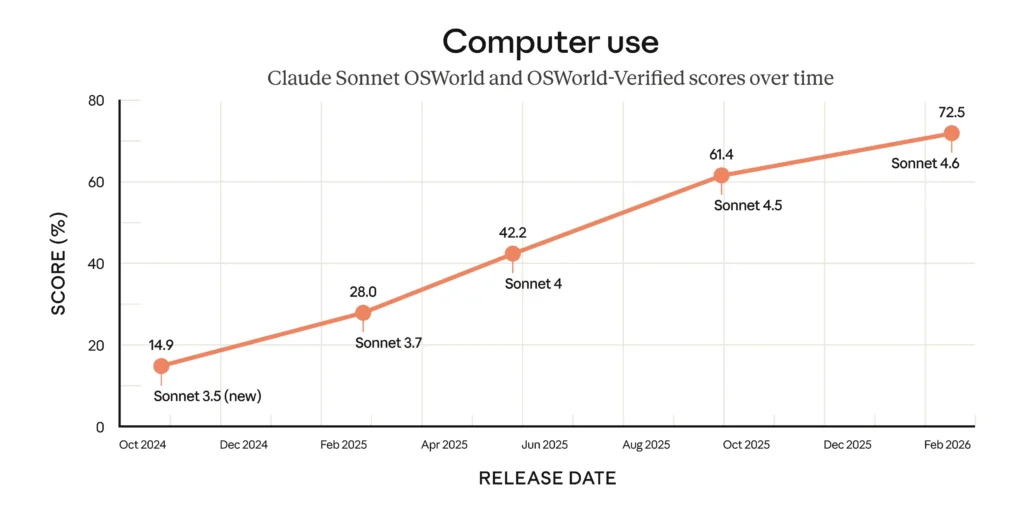

3. Claude Sonnet 4.6

This is the release we are most excited about, and the one we have shifted to as our default across much of the work we do.

Anthropic positioned Sonnet 4.6 as delivering near-Opus performance at Sonnet pricing. In Claude Code testing, users preferred it over the previous Sonnet 70% of the time. On the GDPval-AA Elo benchmark, which measures real expert-level office work, Sonnet 4.6 actually leads the entire field with 1,633 points, above Opus 4.6 and Gemini 3.1 Pro.

It ships with a 1 million token context window in beta at unchanged pricing. GitHub Copilot’s coding agent runs on it. It is the default on Claude.ai’s free and pro plans. Those are deliberate signals about where Anthropic sees it in production.

Best for: Agency workflows, content pipelines, AI-assisted development, sustained agentic work. The best balance of capability, context, and cost in the market right now.

4. GPT-5.3 Codex

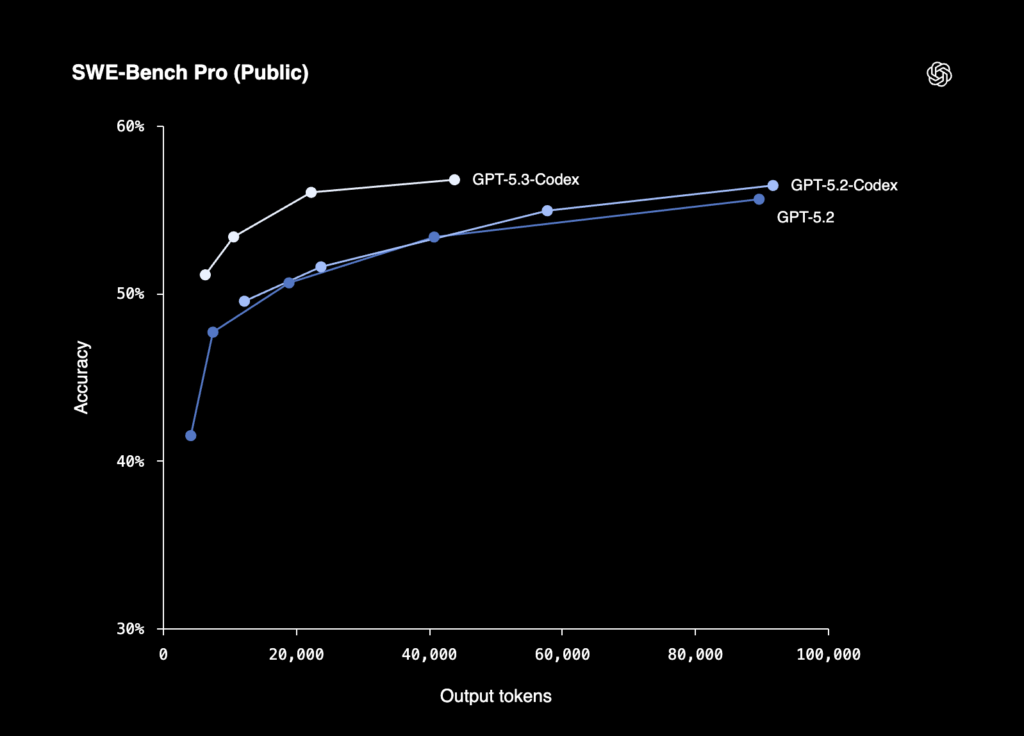

GPT-5.3 Codex is not a general-purpose model. It is built specifically for agentic coding and software development, and it excels at those tasks.

The benchmark numbers are focused: 77.3% on Terminal-Bench 2.0 (leading the category) and 56.8% on SWE-Bench Pro, a harder multi-language version of the standard benchmark. It achieves these scores using fewer tokens than any prior model, which matters at volume.

The practical limitation right now is that API pricing has not been officially published, with access through ChatGPT subscriptions while rollout continues. Based on the GPT-5 Codex lineage, expect approximately $1.25/$10 per million tokens when it arrives.

Best for: Software teams needing a specialist coding agent for terminal-based and multi-language engineering work.

5. Grok 4.20

Grok 4.20 is the most architecturally interesting release of the month.

xAI has shipped a genuinely different approach: four specialised AI agents running in parallel on every complex query. Grok coordinates, Harper handles fact-checking and real-time X data, Benjamin covers logic and coding, and Lucas handles creative reasoning. They debate each other in real time before producing a single answer, and this is built into the inference layer rather than being a user-orchestrated framework.
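To make the coordinator-plus-specialists pattern concrete, here is a toy sketch in Python. It is an illustration of the general idea only, not xAI's implementation: the `specialist` and `coordinate` functions are hypothetical, the model calls are stubbed out with canned strings, and the debate step described above is not modelled at all.

```python
import asyncio

# Roles as described in the article; everything else here is a hypothetical stand-in.
SPECIALISTS = {
    "Harper": "fact-checking",
    "Benjamin": "logic and coding",
    "Lucas": "creative reasoning",
}


async def specialist(name: str, role: str, query: str) -> str:
    """Stub for one specialist agent; a real system would call a model here."""
    await asyncio.sleep(0)  # placeholder for network or inference latency
    return f"{name} ({role}): notes on '{query}'"


async def coordinate(query: str) -> str:
    """Run all specialists concurrently, then merge their drafts into one answer."""
    drafts = await asyncio.gather(
        *(specialist(name, role, query) for name, role in SPECIALISTS.items())
    )
    return "Grok (coordinator) combined:\n" + "\n".join(drafts)


if __name__ == "__main__":
    print(asyncio.run(coordinate("Is this benchmark claim credible?")))
```

The point of the sketch is the shape: one query fans out to several specialists at once, and a single coordinator returns one combined answer. In a user-orchestrated framework you would write this plumbing yourself; the article's claim is that Grok 4.20 does the equivalent inside the model's inference layer.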

In Alpha Arena, where AI models are given real capital to trade live markets, Grok 4.20 was the only profitable model, with four variants in the top six spots. Its provisional Arena Elo sits at 1,505-1,535, up from Grok 4.1’s 1,483.

The current release is a 500B parameter “small” variant. The full model has not finished training, and API access is still coming soon. Access for now is via SuperGrok subscription at $30/month.

Best for: Power users and researchers comfortable being on the bleeding edge. When the full model and API arrive in Q2, this could be a genuine step change.

6. Qwen 3.5

Qwen 3.5 from Alibaba competes on economics rather than benchmark position.

At $0.40/$1.20 per million tokens, it costs a fraction of the Western frontier models. It supports 201 languages, ships with a 1 million token context window, handles multimodal inputs, and can be self-hosted under Apache 2.0 licensing.

There are considerations for UK and European businesses though, especially in enterprise, financial or government functions:

Data residency: The Alibaba Cloud API routes data through Singapore by default, which raises GDPR questions for client work.

Government access concerns: Under Chinese law, data processed by Chinese companies may be accessible to Chinese authorities. This is the same concern driving restrictions on DeepSeek across UK and EU enterprise contexts.

No enterprise SLA: No SOC 2, HIPAA, or ISO compliance certifications via the standard API.

The mitigation is self-hosting. Under Apache 2.0, you can run Qwen 3.5 on your own infrastructure and eliminate the data sovereignty issue entirely. At that point, the economics become very compelling for high-volume internal tasks.

Best for: Cost-sensitive and high-volume applications. Fine for internal use. Approach with caution for client data unless self-hosted.

Head-to-Head Benchmark Comparison

| Benchmark | Gemini 3.1 Pro | Claude Opus 4.6 | Claude Sonnet 4.6 | GPT-5.3 Codex | Grok 4.20 | Qwen 3.5 |
|---|---|---|---|---|---|---|
| ARC-AGI-2 (novel reasoning puzzles that can’t be memorised) | 77.1% | 68.8% | 60.4% | 52.9% | ~16%† | 12% |
| GPQA Diamond (PhD-level science questions) | 94.3% | 91.3% | 89.9% | 92.4% | ~88%† | 88.4% |
| SWE-Bench Verified (real GitHub issues in production code) | 80.6% | 80.8% | 79.6% | n/a | ~72–75%† | 76.4% |
| Terminal-Bench 2.0 (autonomous terminal and DevOps tasks) | 68.5% | 65.4% | 59.1% | 77.3% | n/a | n/a |
| Humanity’s Last Exam (2,500 expert academic questions, with tools) | 51.4% | 53.1% | 49.0% | n/a | ~41%† | 28.7% |
| GDPval-AA Elo (office and knowledge work, human-rated) | 1,317 | 1,606 | 1,633 | ~1,580* | n/a | n/a |
| Arena Elo (live trading with real capital) | n/a | n/a | n/a | n/a | 1,505–1,535 | n/a |
| Context window (max text/code processed in one prompt) | 1M tokens | 200K (1M beta) | 1M tokens (beta) | 400K | 256K–2M | 1M tokens |

*Estimated or based on early tester consensus.

†Grok 4.20 official benchmarks are pending (expected March 2026); figures are based on the Grok 4 baseline, which Grok 4.20 is expected to exceed.

Pricing: Subscriptions and API Costs

Consumer subscription plans (chat interface access):

| Model | Standard Plan | Pro/Power Plan |
|---|---|---|
| Gemini 3.1 Pro | Gemini Advanced ~£18.99/mo | n/a |
| Claude Sonnet 4.6 | Claude Pro ~£17/mo | Claude Max ~£85/mo |
| Claude Opus 4.6 | Claude Pro ~£17/mo | Claude Max ~£85/mo |
| GPT-5.3 Codex | ChatGPT Plus ~£16/mo | ChatGPT Pro ~£160/mo |
| Grok 4.20 | SuperGrok ~$30/mo | SuperGrok Heavy $300/mo |
| Qwen 3.5 | Free tier available | n/a |

API costs are separate and charged per token regardless of subscription (per 1 million tokens, Input / Output):

| Model | Input | Output | API Status |
|---|---|---|---|
| Gemini 3.1 Pro | $2.00 | $12.00 | Live |
| Claude Opus 4.6 | $5.00 | $25.00 | Live |
| Claude Sonnet 4.6 | $3.00 | $15.00 | Live |
| GPT-5.3 Codex | ~$1.25* | ~$10.00* | Rolling out |
| Grok 4.20 | TBC | TBC | Coming soon |
| Qwen 3.5 | $0.40 | $1.20 | Live |

*Estimated based on GPT-5 Codex lineage; official API pricing not yet published

Real-World Monthly API Cost Estimates

Based on a 70/30 input/output split. These figures reflect realistic usage patterns rather than the hyperscale numbers often used in comparisons.
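The monthly figures in this section come from simple arithmetic on the per-million-token API prices listed earlier. As a rough sketch (the function and variable names here are our own, and the GPT-5.3 Codex prices are the estimates noted above rather than published rates), the calculation looks like this:

```python
# USD per 1 million tokens as (input, output), taken from the API pricing table.
# The GPT-5.3 Codex entry is an estimate, not an official price.
PRICES = {
    "Qwen 3.5": (0.40, 1.20),
    "GPT-5.3 Codex (est.)": (1.25, 10.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def monthly_cost(total_millions, input_price, output_price, input_share=0.70):
    """Monthly API cost in USD for a given token volume (in millions of tokens)."""
    input_millions = total_millions * input_share
    output_millions = total_millions * (1 - input_share)
    return input_millions * input_price + output_millions * output_price


for model, (input_price, output_price) in PRICES.items():
    light = monthly_cost(5, input_price, output_price)    # 5M tokens/month
    agency = monthly_cost(25, input_price, output_price)  # 25M tokens/month
    print(f"{model}: ${light:.2f} light use, ${agency:.2f} agency use")
```

If your own workload is more output-heavy (long generated documents, for example), lowering `input_share` will push the totals up noticeably, because output tokens cost several times more than input tokens on every model listed.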

Light Use — 5 million tokens/month

(Solo developer, freelancer, single AI integration)

| Model | Monthly API Cost |
|---|---|
| Qwen 3.5 | $3.20 |
| GPT-5.3 Codex (est.) | $19.38 |
| Gemini 3.1 Pro | $25.00 |
| Claude Sonnet 4.6 | $33.00 |
| Claude Opus 4.6 | $55.00 |
| Grok 4.20 | TBC |

At this level, a subscription plan often works out better value than pay-as-you-go API costs for day-to-day use.

Agency Use — 25 million tokens/month

(Multiple client workflows, regular automation, content pipelines)

| Model | Monthly API Cost |
|---|---|
| Qwen 3.5 | $16.00 |
| GPT-5.3 Codex (est.) | $96.88 |
| Gemini 3.1 Pro | $125.00 |
| Claude Sonnet 4.6 | $165.00 |
| Claude Opus 4.6 | $275.00 |
| Grok 4.20 | TBC |

At agency scale, Gemini’s context caching (up to 75% off repeated content) and Claude’s batch API (50% off non-urgent tasks) can bring these figures down significantly.
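To get a feel for how much those discounts can move the numbers, here is a rough sketch. The share of work that can be batched or cached is purely hypothetical, and we are assuming the batch discount applies to the full cost of batched work while the caching discount applies to input tokens only; check each provider's current pricing pages before budgeting on this basis.

```python
def with_batch_discount(monthly_cost, batch_share, discount=0.50):
    """Cost after sending `batch_share` of the workload through a discounted batch tier."""
    return monthly_cost * (1 - batch_share * discount)


def with_cache_discount(input_cost, output_cost, cached_share, discount=0.75):
    """Cost after `cached_share` of the input spend is billed at the cached rate."""
    return input_cost * (1 - cached_share * discount) + output_cost


# Claude Sonnet 4.6 at agency scale ($165.00), assuming 40% of the work is non-urgent.
sonnet = with_batch_discount(165.00, batch_share=0.40)

# Gemini 3.1 Pro at agency scale: 17.5M input tokens at $2 plus 7.5M output tokens at $12,
# assuming half of the input is repeated context that earns the full 75% discount.
gemini = with_cache_discount(17.5 * 2.00, 7.5 * 12.00, cached_share=0.50)

print(f"Sonnet 4.6 with batching: ${sonnet:.2f}")
print(f"Gemini 3.1 Pro with caching: ${gemini:.2f}")
```

Under these assumptions Sonnet drops from $165 to about $132 and Gemini from $125 to about $112. Caching moves the total less than the headline 75% suggests because it only touches the input side of the bill, and output tokens account for most of the cost.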

Our Verdict

Benchmark winner: Gemini 3.1 Pro. On raw scores across the most tests, Google has reclaimed the top spot. At $2/$12 per million tokens it also offers the best value of any frontier model right now.

Our default for agency and professional work: Claude Sonnet 4.6. The quality of output at this price point has genuinely crossed a threshold with this release. It leads on real expert-level work, has the 1M context window, and is reliable enough in production to trust across a wide range of client tasks. That is why it is our go-to.

One to watch: Grok 4.20. The multi-agent architecture is a genuinely different approach, not just a bigger model. The full version is still in training and the API is not open yet, but when both land in Q2 this could shift the rankings significantly.

Specialist pick: GPT-5.3 Codex. If you run software development workflows with terminal-heavy tasks, it belongs in your toolkit. Worth waiting for official API pricing before committing.

Use with care: Qwen 3.5. Extraordinary economics, but real data considerations for client work unless you are self-hosting.

The headline of 2026 so far is not that one model has won. It is that models are beginning to diverge, and picking the right one for the right task matters more than loyalty to a single provider.

Quick Reference: Which Model for What

| Task | Best Choice | Runner Up |
|---|---|---|
| Complex reasoning and science | Gemini 3.1 Pro | Claude Opus 4.6 |
| Agency content and client work | Claude Sonnet 4.6 | Gemini 3.1 Pro |
| Expert-level professional tasks | Claude Opus 4.6 | Claude Sonnet 4.6 |
| Agentic coding and dev workflows | GPT-5.3 Codex | Claude Sonnet 4.6 |
| Multi-agent complex reasoning | Grok 4.20 | Claude Opus 4.6 |
| High-volume / cost-sensitive | Qwen 3.5 (self-hosted) | Gemini 3.1 Pro |
| Real-time data and financial tasks | Grok 4.20 | Gemini 3.1 Pro |
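If you are running several of these models side by side, the quick reference can be turned into a simple routing lookup. This is a minimal sketch with names of our own choosing (`ROUTES`, `pick_model`); it only returns a model name and leaves the actual API calls to whatever client you already use.

```python
# Task -> (best choice, runner up), copied from the quick reference table.
ROUTES = {
    "complex reasoning and science": ("Gemini 3.1 Pro", "Claude Opus 4.6"),
    "agency content and client work": ("Claude Sonnet 4.6", "Gemini 3.1 Pro"),
    "expert-level professional tasks": ("Claude Opus 4.6", "Claude Sonnet 4.6"),
    "agentic coding and dev workflows": ("GPT-5.3 Codex", "Claude Sonnet 4.6"),
    "multi-agent complex reasoning": ("Grok 4.20", "Claude Opus 4.6"),
    "high-volume / cost-sensitive": ("Qwen 3.5 (self-hosted)", "Gemini 3.1 Pro"),
    "real-time data and financial tasks": ("Grok 4.20", "Gemini 3.1 Pro"),
}


def pick_model(task, unavailable=frozenset()):
    """Return the best choice for a task, or the runner up if the best is unavailable."""
    best, runner_up = ROUTES[task.strip().lower()]
    return runner_up if best in unavailable else best


# Grok 4.20 has no public API yet, so fall back to the runner up for now.
print(pick_model("Multi-agent complex reasoning", unavailable={"Grok 4.20"}))
print(pick_model("Agency content and client work"))
```

Keeping the mapping in one place makes it easy to swap a model out when pricing or availability changes, which is likely to happen more than once this year.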

The AI models listed above are powerful tools, but deploying them effectively requires the right strategy and integrations.

Businesses we work with are already using AI for customer support automation, marketing and SEO optimisation, and AI agents connected to CRMs and internal tools.

About Design for Online®: We are a full service digital marketing agency in Bury St Edmunds, Suffolk. Our services include web design, SEO, PPC, AI business automation, photography and videography. We work with businesses across the UK to help them grow online.

For enquiries: hello@designforonline.com